Debug - Killer Exergame on Android

Objective

Build a totally addictive novel exergame for kids (and possibly adults too) that uses motion sensing to keep the kids engaged in a very high level of physical activity for long periods of time.

Overview and Challenges

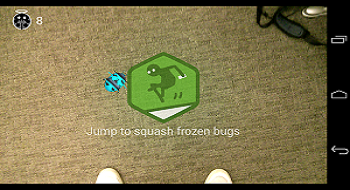

This was the final project for the Mobile Application Development (CS5800) course. The basic idea of our game is that players hold their phones with the back camera facing the ground and see bugs crawling across the real-time camera view. They kill these bugs with their feet by burning or squashing them in the game. There were four main challenges: 1. Developing robust computer vision algorithms to detect a distinct color on the players' shoes, along with their walking direction and speed. 2. Using OpenGL to render a corresponding 3D game world. 3. Leveraging the accelerometer to detect jumping. 4. Finishing the application in one month with a team of only two people.
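
The jump-detection challenge can be illustrated with a small sketch. It is not the detector used in the game: it assumes that a hand-held phone reads close to zero acceleration while the player is airborne and shows a spike on landing. The class name `JumpDetector` and all three thresholds are hypothetical and would need tuning on real devices.

```java
final class JumpDetector {
    // Hypothetical thresholds in m/s^2; not taken from the original project.
    static final double FREE_FALL_MAX = 3.0;    // below this, the phone is treated as in free fall
    static final double LANDING_MIN = 15.0;     // above this, a landing impact is assumed
    static final int MIN_FREE_FALL_SAMPLES = 3; // ignore dips shorter than this

    /** Magnitude of one accelerometer reading (x, y, z in m/s^2). */
    static double magnitude(double x, double y, double z) {
        return Math.sqrt(x * x + y * y + z * z);
    }

    /** Counts free-fall phases that are followed by a landing spike. */
    static int countJumps(double[] magnitudes) {
        int jumps = 0;
        int freeFallRun = 0;      // consecutive near-zero-g samples seen so far
        boolean airborne = false; // set once the free fall has lasted long enough
        for (double m : magnitudes) {
            if (m < FREE_FALL_MAX) {
                freeFallRun++;
                if (freeFallRun >= MIN_FREE_FALL_SAMPLES) {
                    airborne = true;
                }
            } else {
                freeFallRun = 0;
                if (airborne && m > LANDING_MIN) {
                    jumps++;
                    airborne = false;
                }
            }
        }
        return jumps;
    }
}
```

On Android, the magnitudes would come from accelerometer sensor events; requiring several consecutive free-fall samples is one simple way to keep ordinary walking from registering as a jump.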

The Journey

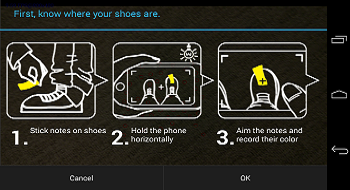

The game design took us as much time as the programming. There were many goals we wanted to achieve in the application. First, since it is an exergame, players are supposed to work up a sweat by playing it. So we introduced three different kinds of motion to play the game: walking (for chasing bugs), jumping (for squashing bugs) and shaking (for freezing bugs). The variety of motions is designed on purpose to make the game stickier. Second, it is natural for people to squash bugs when they see them, so we built the game around bugs rather than another theme. Third, bugs must appear and interact with players in a realistic manner. We developed an algorithm that makes bugs bounce when they hit the edges of the floor and pause on and off, just like real bugs on the ground. Fourth, a good game should be easy to learn. We developed built-in step-by-step tutorials and succinct instructions in both images and text. Our future tasks include integrating social network sharing and a multiplayer mode for competition. As far as we know, the application is one of very few, if not the only one, that combine augmented reality and mHealth.
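
The bouncing and pausing behavior described above could look roughly like the following. This is a minimal sketch, not the game's actual algorithm: the `Bug` class, the rectangular floor, the reflection rule and the random pause model are all assumptions made for illustration.

```java
import java.util.Random;

final class Bug {
    static final int MAX_PAUSE_TICKS = 30; // hypothetical upper bound on one pause

    double x, y;    // position on the floor, in arbitrary units
    double vx, vy;  // velocity per tick
    int pauseTicks; // remaining ticks during which the bug stays still

    Bug(double x, double y, double vx, double vy) {
        this.x = x;
        this.y = y;
        this.vx = vx;
        this.vy = vy;
    }

    /** Advances the bug by one tick on a floor spanning [0, width] x [0, height]. */
    void step(double width, double height, Random rng, double pauseChance) {
        if (pauseTicks > 0) { // still halted from an earlier pause
            pauseTicks--;
            return;
        }
        if (rng.nextDouble() < pauseChance) { // occasionally stop, like a real bug
            pauseTicks = 1 + rng.nextInt(MAX_PAUSE_TICKS);
            return;
        }
        x += vx;
        y += vy;
        // Reflect off the floor edges; assumes the speed is smaller than the floor size.
        if (x < 0) {
            x = -x;
            vx = -vx;
        } else if (x > width) {
            x = 2 * width - x;
            vx = -vx;
        }
        if (y < 0) {
            y = -y;
            vy = -vy;
        } else if (y > height) {
            y = 2 * height - y;
            vy = -vy;
        }
    }
}
```

Calling `step` once per frame with a small `pauseChance` gives a stop-and-go crawl; mirroring the overshoot instead of clamping keeps a bug from sticking to an edge.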

Project Info

Collaborators: Shang Ma, Shaojie Wang (UI)

Languages & Tools: Java, C, Eclipse, MAT (Memory Analyzer)

Resources:

Final Presentation PPT,

Project Proposal,

Google Play Store

Timeline: Nov 2013 - Dec 2013

Mobile Service Awareness Platform

Objective

The mobile Internet awareness platform provides operators, mobile service developers, and operations and maintenance personnel with visualized data about the QoS of mobile services. Users can clearly observe the health of their applications or websites through various views, such as Timeline and Map View. The platform also diagnoses problems in mobile services that degrade the user experience, whether implicitly or explicitly.

Overview and Challenges

The mobile Internet will be a huge trend in the future. Unlike traditional network tests, the mobile Internet awareness platform launches tests from the user's perspective: it collects results while opening websites or using applications by emulating real users' behavior.

The Journey

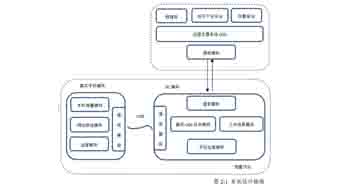

This project lasted my whole senior year and was chosen as my undergraduate graduation project. I designed and developed the whole system and its demo project myself. The system consists of two components: measurement nodes and the Operation Support System (OSS). In a measurement node, mobile devices are connected to a PC (the Runner Server) via USB. By communicating with the Runner Servers, the OSS manages the whole group of mobile devices and dispatches measurement tasks to any available device. The mobile test devices automatically open websites or use applications according to test scripts. There are two types of measurement schemes: flow tests and optimization tests. A flow test checks whether every key procedure finishes within its time threshold. For optimization tests, I introduced about ten metrics that may negatively affect the user experience, either implicitly or explicitly. In the demo project, I integrated several tools from the Android SDK ecosystem, such as Hierarchy Viewer and Robotium, to build the active measurement module. I used Java to implement the Runner Server's modules. For communication between the Runner Server and the task-creation pages, I adopted WebSocket, a technology introduced alongside HTML5. The result pages not only show the basic parameters found in traditional tests but also present QoS in rich formats such as maps, time frames and historical timelines.
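
The flow-test idea, checking every key procedure against a time threshold, can be sketched as follows. The class `FlowTest`, the step names and the millisecond values are hypothetical; the original system's data model is not described here.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

final class FlowTest {
    /**
     * Returns the names of the steps that failed the flow test: steps whose
     * measured duration exceeds the threshold, or that were never measured.
     */
    static List<String> failedSteps(Map<String, Long> thresholdsMs,
                                    Map<String, Long> measuredMs) {
        List<String> failed = new ArrayList<>();
        for (Map.Entry<String, Long> entry : thresholdsMs.entrySet()) {
            Long measured = measuredMs.get(entry.getKey());
            if (measured == null || measured > entry.getValue()) {
                failed.add(entry.getKey());
            }
        }
        return failed;
    }
}
```

For example, with thresholds of 3000 ms for a hypothetical `openHomePage` step and 2000 ms for `login`, measured times of 2500 ms and 4100 ms would report only `login` as failed. Treating an unmeasured step as a failure is a design choice of this sketch, so that a crashed script cannot pass silently.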

Project Info

Advisor: Yin Hao

Languages & Tools: HTML5, CSS3, JavaScript (jQuery), Java, Dreamweaver CS5.5, Eclipse, Android SDK

Resources:

Codes' host,

Project Thesis

Timeline: July 2012 - July 2013

Virtual Costumes-Trial System

Objective

To design and build a virtual costumes-trial system to eliminate the worry of purchasing unsuitable clothes online.

Overview and Challenges

With the rapid development of e-commerce, a large number of consumers have chosen to purchase clothes online. However, it has always been hard for consumers to decide on clothes online without knowing how they will look with the clothes on. To eliminate this worry, we designed a virtual costumes-trial system based on 3D body modeling. The challenges were significant because we had to consider every aspect at both the hardware and software levels.

The Journey

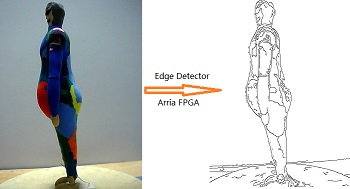

We always put the user's experience first. After several brainstorming sessions, we concluded that simplicity, convenience and universality would greatly improve the user's experience. First, our team chose the Intel Atom E6x5C, which has a built-in Altera Arria FPGA, as the processor of our embedded system; its performance made quick data collection and processing possible. For the camera, we chose commonly available USB cameras, which let most users capture the data conveniently. I implemented the Canny algorithm on the FPGA to quickly obtain the edge profiles of users' bodies. I then used the processed body information to build an accurate 3D human model with the Java 3D APIs. For the project demo, we prepared several skins of different clothes. Once a clothes skin was attached to a generated human model, the result of trying the clothes on could be viewed from a full 360 degrees.
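
To give a feel for the edge-extraction step, the sketch below computes the Sobel gradient magnitude, which is the stage a Canny detector typically runs after smoothing and before non-maximum suppression and hysteresis thresholding. It is a software illustration in Java only, not the FPGA implementation; the class name `SobelSketch` and the grayscale `int[][]` image layout are assumptions.

```java
final class SobelSketch {
    /**
     * Returns the Sobel gradient magnitude for each interior pixel of a
     * grayscale image indexed as gray[row][column]; border pixels stay 0.
     */
    static double[][] gradientMagnitude(int[][] gray) {
        int h = gray.length;
        int w = gray[0].length;
        double[][] out = new double[h][w];
        for (int y = 1; y < h - 1; y++) {
            for (int x = 1; x < w - 1; x++) {
                // Horizontal gradient: right column minus left column.
                int gx = -gray[y - 1][x - 1] + gray[y - 1][x + 1]
                        - 2 * gray[y][x - 1] + 2 * gray[y][x + 1]
                        - gray[y + 1][x - 1] + gray[y + 1][x + 1];
                // Vertical gradient: bottom row minus top row.
                int gy = -gray[y - 1][x - 1] - 2 * gray[y - 1][x] - gray[y - 1][x + 1]
                        + gray[y + 1][x - 1] + 2 * gray[y + 1][x] + gray[y + 1][x + 1];
                out[y][x] = Math.hypot(gx, gy);
            }
        }
        return out;
    }
}
```

Large values mark pixels where brightness changes sharply, such as the boundary between a body and the background; a full Canny pipeline would then thin and threshold these responses into a clean outline.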

Project Info

Collaborators: Yunbing Ding, Xiaopeng Bi, Jinming Ma

Languages & Tools: Verilog, C, Java, Quartus II, Eclipse, 3DMax

Resources:

Final Report,

Final Report,

Presentation PPT

Timeline: March 2012 - July 2012

Pitch Match - Practice Assistant for Music Beginners

Objective

Our goal was to design an app that acts as a professional robot teacher for music beginners when their music teachers are absent.

Overview and Challenges

Intonation, rhythm and sound are the three main problems that torment beginners learning an instrument. Without on-site instruction from a professional music teacher, beginners often do not even know what went wrong in their playing. Pitch Match is a smart app on Android that serves as a professional pitch and rhythm correction assistant for music beginners. Practicing an instrument no longer has to be expensive, tedious and difficult! The main challenges were designing a friendly but professional interface and building accurate FFT filters.

The Journey

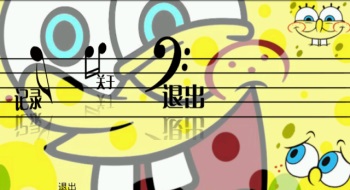

At first, I designed and simulated an FFT filter to extract the frequencies of pitches, and it generated accurate outputs under ideal conditions. However, when I integrated the FFT filter into our app running on a real phone, problems emerged because environmental noise had a large effect. So I added a module that collects environmental noise, making the app smarter at filtering out useless sound frequencies. Another challenge was balancing the processing speed of ordinary smartphones against the accuracy of the FFT filter. Considering that most musical pitches have frequencies below 5000 Hz, I chose a 2048-point FFT filter to ensure both identification accuracy and smooth operation of the application. After that, my teammates and I devoted ourselves to polishing the UI. The detailed statistics forms and the easy-to-use sliding menu were both indispensable elements. We also designed several skins for the application.
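
The approach above, a 2048-point FFT combined with a recorded noise profile, can be sketched as follows. This is an illustration under stated assumptions, not the app's code: the noise handling shown is plain per-bin spectral subtraction, the class `PitchSketch` and its method names are hypothetical, and the caller supplies the sample rate. At an assumed 11025 Hz, for example, the Nyquist limit of about 5512 Hz covers pitches below 5000 Hz and each of the 2048 bins is about 5.4 Hz wide.

```java
final class PitchSketch {
    /** In-place iterative radix-2 FFT; the array length must be a power of two. */
    static void fft(double[] re, double[] im) {
        int n = re.length;
        // Bit-reversal permutation.
        for (int i = 1, j = 0; i < n; i++) {
            int bit = n >> 1;
            for (; (j & bit) != 0; bit >>= 1) {
                j ^= bit;
            }
            j ^= bit;
            if (i < j) {
                double t = re[i]; re[i] = re[j]; re[j] = t;
                t = im[i]; im[i] = im[j]; im[j] = t;
            }
        }
        // Butterfly passes over blocks of length 2, 4, ..., n.
        for (int len = 2; len <= n; len <<= 1) {
            double angle = -2 * Math.PI / len;
            for (int i = 0; i < n; i += len) {
                for (int k = 0; k < len / 2; k++) {
                    double wr = Math.cos(angle * k);
                    double wi = Math.sin(angle * k);
                    int a = i + k;
                    int b = i + k + len / 2;
                    double xr = re[b] * wr - im[b] * wi;
                    double xi = re[b] * wi + im[b] * wr;
                    re[b] = re[a] - xr;
                    im[b] = im[a] - xi;
                    re[a] += xr;
                    im[a] += xi;
                }
            }
        }
    }

    /** Magnitude spectrum of a real signal (first half of the bins only). */
    static double[] magnitudes(double[] samples) {
        double[] re = samples.clone();
        double[] im = new double[samples.length];
        fft(re, im);
        double[] mag = new double[samples.length / 2];
        for (int k = 0; k < mag.length; k++) {
            mag[k] = Math.hypot(re[k], im[k]);
        }
        return mag;
    }

    /** Strongest frequency in Hz after subtracting a per-bin noise profile. */
    static double dominantHz(double[] samples, double[] noiseMag, double sampleRate) {
        double[] mag = magnitudes(samples);
        int best = 1; // bin 0 is the DC offset, so the search starts at bin 1
        double bestValue = 0;
        for (int k = 1; k < mag.length; k++) {
            double value = Math.max(0, mag[k] - noiseMag[k]);
            if (value > bestValue) {
                bestValue = value;
                best = k;
            }
        }
        return best * sampleRate / samples.length;
    }
}
```

The noise profile would be obtained by calling `magnitudes` on a frame recorded while the player is silent. The result is only accurate to one bin width, so a real tuner would likely interpolate around the peak.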

Project Info

Collaborators: Shaojie Wang, Yajie Zhang

Languages & Tools: Java, Matlab, Eclipse, Photoshop CS5, Android SDK

Resources:

APK File,

Source Code

Timeline: Oct. 2011 - Mar. 2012

MemoQuiz - Game App Development

Objective

To design and develop an appealing memory test game app.

Overview and Challenges

The biggest challenge was making my app stand out from the wide variety of apps already available.

The Journey

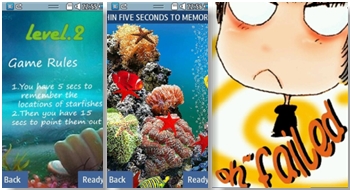

This experience was a milestone in my interest in HCI. I participated in the Samsung App Development Competition on my campus during my sophomore year. At that time, I had no prior experience with object-oriented programming and no ideas about UX or UI design. I delved into Java programming, extremely excited to make my first app. The game itself was simple, but I made it sticky by designing hierarchical missions and adding appealing animations. After three months of building, modifying and debugging, my efforts eventually paid off. My first mobile app was approved by the Samsung App Store and has had nearly 40 thousand downloads to date.

Project Info

Collaborators: Shaojie Wang, Wentao Zhao, Yunbing Ding

Languages & Tools: Java, Eclipse, Photoshop, Bada IDE

Resources: Source Code

Timeline: Sept. 2010 - March 2011